I’ve already written a few articles on the possibility that changes in atmospheric pressure caused the temperature changes over the ice-age cycle (overview, calculations). These suggest that a 30% increase in air pressure would be enough on its own to cause the 8C warming we see coming out of an ice age.

It also means that for an ice-age cycle of around 80,000 years, the rate of drop in pressure (if pressure alone were the cause of all temperature change) would be 300mb (30% of roughly 1000mb) over 80,000 years, or 0.375mb/century.
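As a quick check of that figure, here is a minimal sketch of the arithmetic. The constant and function names are my own for illustration, and it assumes the pressure falls at a steady rate over the whole cycle, which is a simplification.

```python
# Figures taken from the text: a 300 mb change spread over an 80,000 year cycle.
PRESSURE_CHANGE_MB = 300.0
CYCLE_YEARS = 80_000


def rate_mb_per_century(delta_mb: float, years: float) -> float:
    """Average rate of change in mb per century, assuming a steady (linear) change."""
    return delta_mb / years * 100.0


required_rate = rate_mb_per_century(PRESSURE_CHANGE_MB, CYCLE_YEARS)
print(f"Required rate: {required_rate:.3f} mb/century")
```

Changing `CYCLE_YEARS` shows how sensitive the required rate is to the assumed length of the cycle.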

Today I came across evidence of long-term pressure change in an old article on WUWT:

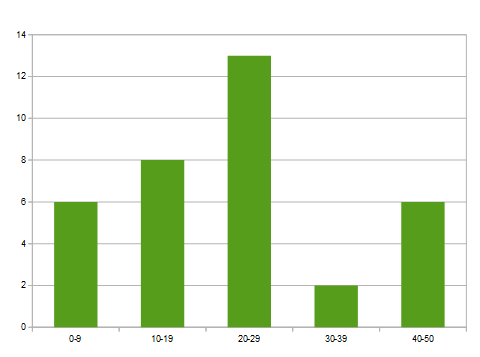

This shows a drop in pressure from 1008.4 to 1007.6 over 91 years, or about 0.88mb/century. If sustained, that is sufficient to produce the 300mb drop, and so the 8C fall in global temperature that occurs over an ice-age cycle, in around 34,000 years.
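The same sort of back-of-envelope check can be run on the chart readings. This is only a sketch: the names are hypothetical, the two pressure values are simply the ones quoted above, and it assumes the 91-year trend continues unchanged, which the data cannot confirm.

```python
# Readings quoted in the text (mb) and the span they cover (years).
P_START_MB = 1008.4
P_END_MB = 1007.6
SPAN_YEARS = 91
# Total drop attributed to a full cycle; 300 mb is the figure used earlier in the post.
TARGET_DROP_MB = 300.0


def observed_rate_mb_per_century(p_start: float, p_end: float, years: float) -> float:
    """Average observed drop in mb per century between two readings."""
    return (p_start - p_end) / years * 100.0


def years_to_drop(target_mb: float, rate_per_century: float) -> float:
    """Years needed to fall by target_mb if the rate stays constant (an assumption)."""
    return target_mb / rate_per_century * 100.0


rate = observed_rate_mb_per_century(P_START_MB, P_END_MB, SPAN_YEARS)
years = years_to_drop(TARGET_DROP_MB, rate)
print(f"Observed rate: {rate:.2f} mb/century")
print(f"Time to fall {TARGET_DROP_MB:.0f} mb at that rate: {years:,.0f} years")
```

Because the whole result rests on a 0.8mb difference between two readings, small changes to either value move the answer a long way.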

This is the first concrete evidence I have seen that we are part of a long-term decline in atmospheric pressure, which in turn will lead to a long-term decline in temperature. That decline continues until the earth sees the triggering of another caterpillar event, whereupon the small increase in temperature from a change in the orbital cycle will lead to yet another “runaway global warming event” that will take the planet out of the next ice age and into the next interglacial.

At that point the warming is brought to a “hard stop”, so that further warming is all but impossible.